Posters Presentations

- Full Access
- Onsite Student Access
- Onsite Experience
- Virtual Full Access
- Virtual Basic Access

*All presentations are available in the virtual platform on-demand. The posters will also be exhibited onsite in Hall E, Tokyo International Forum from 15 – 17 December 2021.

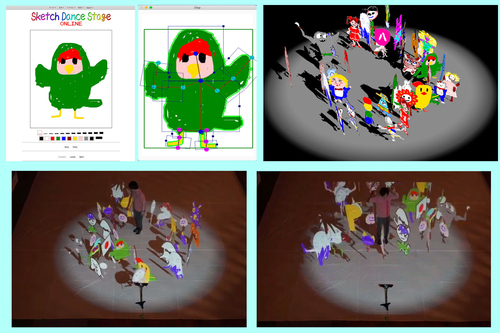

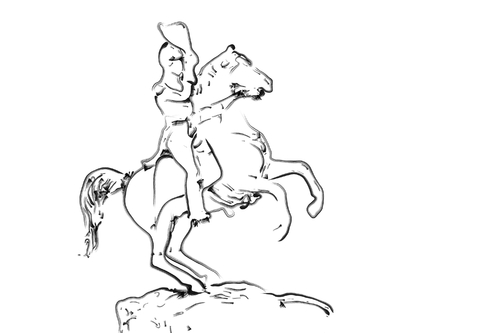

"Sketch Dance Stage Online": Three-dimensional CG projection of hand-drawn characters to real space and interaction

Abstract: We introduce "Sketch Dance Stage Online", a piece of interactive content. A character drawn on the web page appears three-dimensionally in the remote real space while dancing.

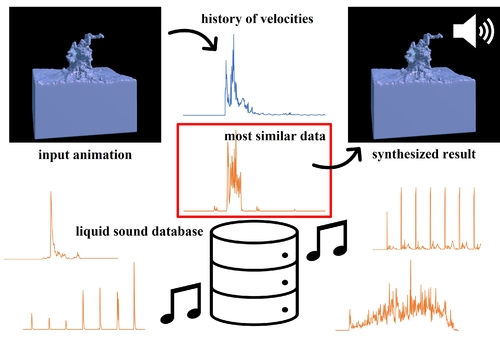

A Liquid Sound Retrieval using History of Velocities in Physically-based Simulation

Abstract: We propose a data-driven method for synthesizing sound effects for liquid animations. Our method achieves fast synthesis of liquid sound for simulated liquid motion, once the database is prepared.

A Procedural MatCap System for Cel-Shaded Japanese Animation Production

Abstract: We present a procedural MatCap shading system specifically developed for the production of Japanese cel-shaded animations. We discuss the system design based on the practices in a Japanese animation studio.

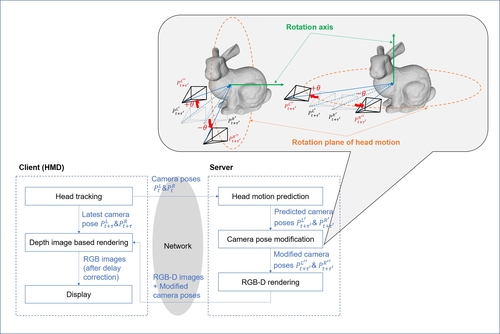

A Robust Display Delay Compensation Technique Considering User's Head Motion Direction for Cloud XR

Abstract: We propose a novel rendering framework for cloud XR, where the server renders RGB-D images with the optimally arranged rendering viewpoints, and the client applies DIBR to compensate for M2PL.

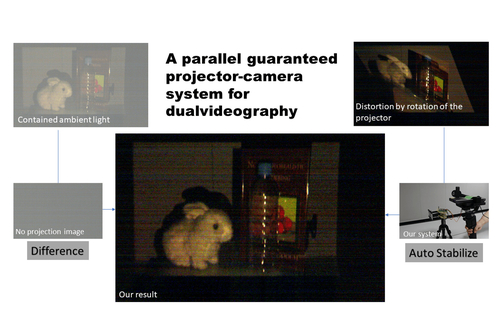

A parallel guaranteed projector-camera system for dual videography

Abstract: We propose a method to mitigate the two limitations of O'Toole et al.'s dual videography: the "imaging system limitation" and the "imaging environment limitation".

ARMixer: Live Stage Monitor Mixing through Gestural Interaction in Augmented Reality

Abstract: ARMixer is a system that allows musicians to perform an in-situ self-stage monitor mixing through gestures in augmented reality and provides a real-time and intuitive mixing experience.

ARinVR: Bringing Mobile AR into VR

Abstract: This paper presents ARinVR, a novel concept that seamlessly and synchronously integrates handheld mobile AR and head-worn VR spaces to allow users to see AR scenarios in a virtual environment.

An Abstract Drawing Method for Same Shaped but Densely Arranged Many Objects

Abstract: We propose a hierarchical abstract drawing method for many 3D objects which are densely arranged and have almost the same shape.

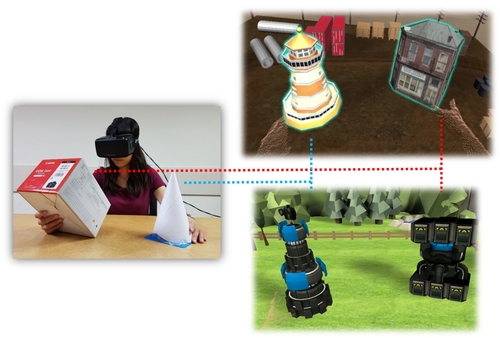

Associating Real Objects with Virtual Models for VR Interaction

Abstract: This work associates real objects with virtual models. A user can interact with these real objects when she or he is immersed in the virtual environment.

BeeWave : Create swarm kinetic movement using by SMA display and embedded cellular automata-based mechanism

Abstract: "BeeWave" is a kinetic art sculpture driven by a shape memory alloy (SMA) and an embedded cellular automata mechanism. It uses the material properties of the shape memory alloy to create an elegant swaying motion. The basic motor element can be seen as a triangular cone, and the basic kinetic sculpture element is a module of these triangular cones, which can be assembled into a three-dimensional sphere. The modular system works as a cellular automaton: the cells receive, store, and process signals transmitted between them according to simple rules, which trigger the global behavior as an unpredictable wave cycle.
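The idea of simple local rules producing a travelling wave can be illustrated with a minimal sketch. Everything here is an assumption made for illustration and not the poster's actual mechanism: the three states (`REST`, `ACTIVE`, `RECOVER`), the ring topology instead of a sphere, and the rule that a resting cell activates when a neighbour is active.

```python
# Hypothetical three-state excitable cellular automaton on a ring.
# The states and the rule are illustrative assumptions, not BeeWave's rules.
REST, ACTIVE, RECOVER = 0, 1, 2


def step(cells):
    """Advance every cell by one tick using only its two ring neighbours."""
    n = len(cells)
    nxt = []
    for i, state in enumerate(cells):
        left, right = cells[(i - 1) % n], cells[(i + 1) % n]
        if state == ACTIVE:
            nxt.append(RECOVER)      # an active cell must recover next
        elif state == RECOVER:
            nxt.append(REST)         # a recovering cell returns to rest
        elif ACTIVE in (left, right):
            nxt.append(ACTIVE)       # a resting cell is triggered by a neighbour
        else:
            nxt.append(REST)
    return nxt


def run(cells, steps):
    """Return the list of states over time, starting with the initial state."""
    history = [list(cells)]
    for _ in range(steps):
        history.append(step(history[-1]))
    return history


if __name__ == "__main__":
    for row in run([ACTIVE, REST, REST, REST, REST, REST], 5):
        print("".join(".#o"[s] for s in row))
```

In this toy version a single active cell sends two wavefronts around the ring in opposite directions, and they cancel where they meet because recovering cells cannot be re-triggered immediately.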

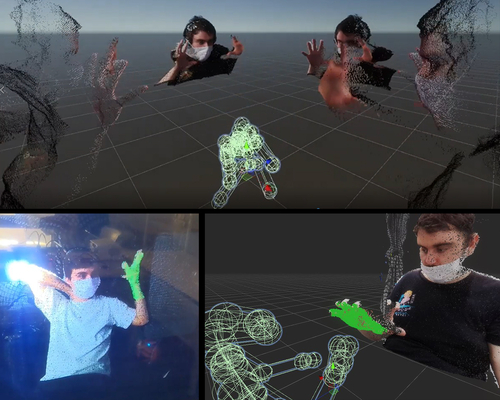

BridgedReality: A Toolkit Connecting Physical and Virtual Spaces through Live Holographic Point Cloud Interaction

Abstract: Bridged Reality is a toolkit that allows users to experience live, bare-body, point cloud data interaction by configuring their own augmented spaces.

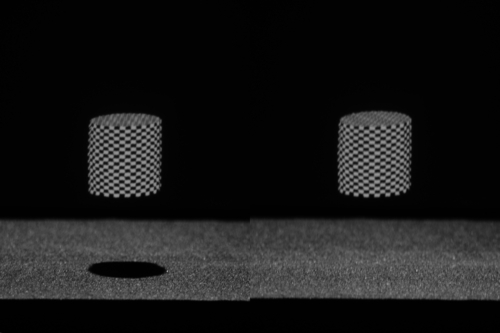

Can Shadows Create a Sense of Depth to Mid-air Image?

Abstract: We investigated the effect of shadows on the perception of the shape of a mid-air image by projecting shadows of different shapes onto a mid-air image.

Cartoon Sliding: An MR System for Experiencing Sliding Down a Cliff

Abstract: We present an MR system for experiencing a cartoon-like event of sliding down a cliff and stopping on the wall, enabled by using wall and knife devices.

Class Balanced Sampling for the Training in GANs

Abstract: We propose class standardized critic score based sample selection which enables class balanced sample selection. Our method achieves improved FID score and Intra-FID score compared to prior Top-k selection.
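A minimal sketch of how class-standardized selection can differ from plain Top-k, under stated assumptions: the abstract does not give the exact formula, so the per-class z-score used here, and the helper names `class_standardized_top_k` and `plain_top_k`, are hypothetical illustrations, not the authors' implementation.

```python
from collections import defaultdict
from statistics import mean, pstdev


def plain_top_k(scores, k):
    """Indices of the k highest raw critic scores (baseline Top-k)."""
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return ranked[:k]


def class_standardized_top_k(scores, labels, k):
    """Indices of the k samples with the highest per-class z-scored critic score.

    Assumption: "class standardized" is taken to mean subtracting the class
    mean and dividing by the class standard deviation.
    """
    by_class = defaultdict(list)
    for score, label in zip(scores, labels):
        by_class[label].append(score)

    # Per-class mean and standard deviation; guard against zero spread.
    stats = {}
    for label, values in by_class.items():
        sigma = pstdev(values)
        stats[label] = (mean(values), sigma if sigma > 0 else 1.0)

    standardized = [
        (score - stats[label][0]) / stats[label][1]
        for score, label in zip(scores, labels)
    ]
    ranked = sorted(range(len(scores)), key=lambda i: standardized[i], reverse=True)
    return ranked[:k]


if __name__ == "__main__":
    # Class "a" systematically receives higher critic scores than class "b".
    scores = [10.0, 9.0, 8.0, 7.0, 1.0, 0.9, 0.2, 0.1]
    labels = ["a", "a", "a", "a", "b", "b", "b", "b"]
    print(plain_top_k(scores, 4))
    print(class_standardized_top_k(scores, labels, 4))
```

With these toy scores, plain Top-k keeps only class "a" samples, while the standardized ranking keeps the best samples of each class, which is the balancing effect the abstract describes.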

Decision of Line Structure beyond Junctions Using U-Net-Based CNN for Line Drawing Rendering

Abstract: This paper introduces a new neural network to decide line structure beyond junctions, proposes a method to generate training data for it, and shows the resulting line drawing renderings.

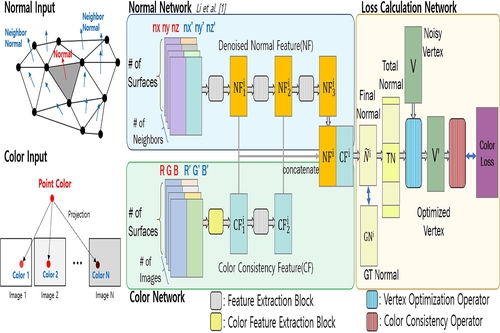

Deep Color-Normal Residual Networks for Geometry Refinement Extracting Color Consistency and Fine Geometry

Abstract: We refine 3D geometry based on color consistency using a deep neural network. Our method optimizes the location of each vertex maximizing the quality of related textures.

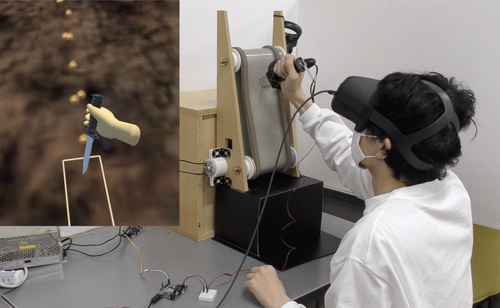

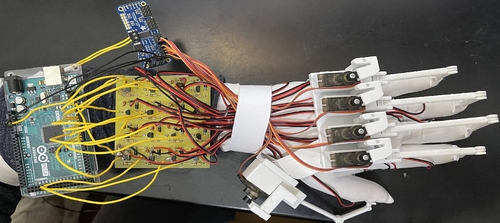

Development of a Wearable Embedded System providing Tactile and Kinesthetic Haptics Feedback for 3D Interactive Applications

Abstract: We present a wearable, lightweight, and affordable embedded system that provides tactile feedback through 15 vibration motors and kinesthetic feedback with an exoskeleton and five servo motors in 3D applications.

Dynamic and Occlusion-Robust Light Field Illumination

Abstract: There is high demand for dynamic and occlusion-robust illumination to improve lighting quality for portrait photography and assembly. Multiple projectors are required for the light field to achieve such illumination. This paper proposes a dynamic and occlusion-robust illumination technique by employing a light field formed by a lens array instead of using multiple projectors. Dynamic illumination is obtained by introducing a feedback system that follows the motion of the object. The designed lens array incorporates a wide viewing angle, making the system robust against occlusion. The proposed system was evaluated through projections onto a dynamic object.

Emotion Guided Speech-Driven Facial Animation

Abstract: We introduce emotion guided speech-driven facial animation, which simultaneously performs classification and regression on the speech data to generate a controllable level of evident emotional expression in facial animation.

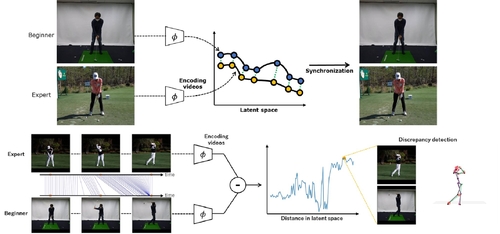

How Can I Swing Like Pro?: Golf Swing Analysis Tool for Self Training

Abstract: We create an application for analyzing and visualizing the discrepancy between two input golf swing motions to help users understand the difference between their swings and various experts' swings during self-training.

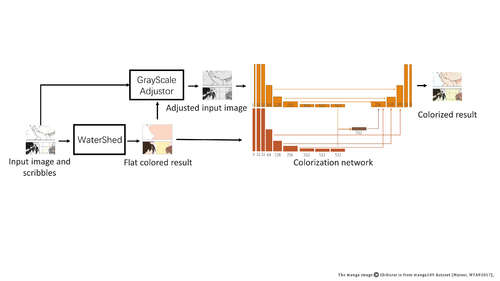

Interactive Manga Colorization with Fast Flat Coloring

Abstract: This paper proposes an interactive semi-automatic colorization system for manga. In our system, users can colorize the monochrome manga images interactively by specifying the desired colors with scribble inputs.

Joint Augmented Reality Video Analytics and Artificial Intelligence Supervision

Abstract: A novel AR application that explores Human-AI interaction in a computer vision context by providing an integrated platform for video analytics and AI model fine-tuning.

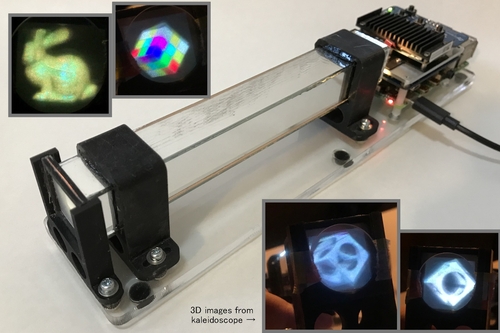

Kaleidoscopic Display: easy-to-make light-field display

Abstract: We propose a 3-dimensional display with kaleidoscope-like optics that creates a virtual multi-projection system. The display is simple and compact, and uses a small number of easily available components.

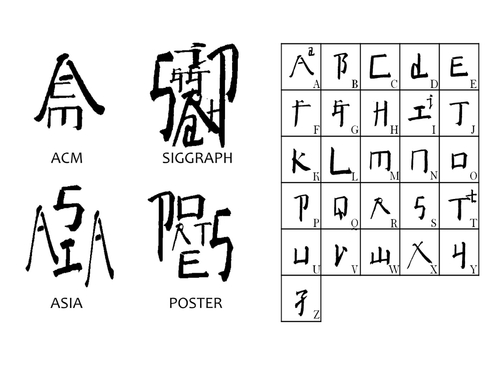

Learning English to Chinese Character: Calligraphic Art Production based on Transformer

Abstract: In a new application of artificial intelligence, a transformer-based assembly method is used to create Square Word Calligraphy.

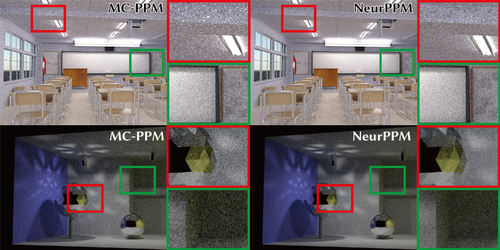

Light Source Selection in Primary-Sample-Space Neural Photon Sampling

Abstract: Our photon sampling is based on a normalizing flow that is conditioned by a feature vector representing a light index. The conditioning allows us to represent a discrete probability distribution for light source selection in photon mapping, which is difficult with conventional neural importance sampling without the conditioning.

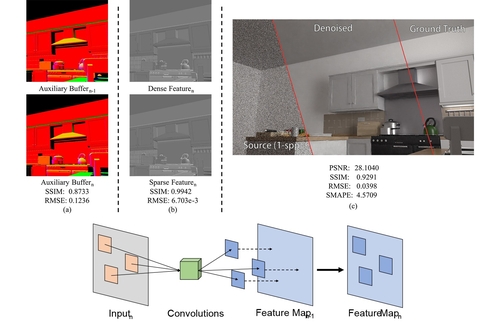

Monte Carlo Denoising with a Sparse Auxiliary Feature Encoder

Abstract: Denoising Monte Carlo path tracing requires utilizing auxiliary buffers efficiently. In this work, we introduce sparse convolutions to the auxiliary buffer encoder, largely reducing its computations without apparent performance drops.

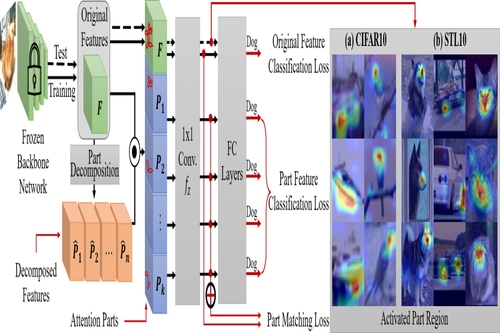

Occlusion Robust Part-aware Object Classification through Part Attention and Redundant Features Suppression

Abstract: We propose a part-aware deep learning approach for partial occlusion robust object classification. We train a network without occluded objects in training time and test the network under partial occlusions.

Optimized binarization for eggshell carving art

Abstract: A tailored objective function for optimizing the binary image used in eggshell carving art.

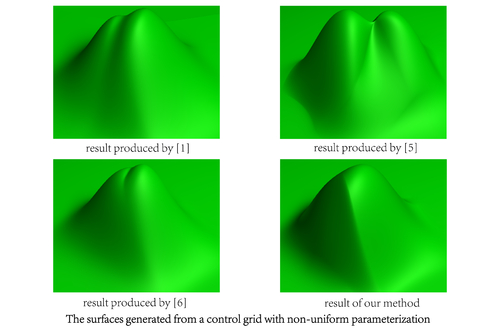

Patching Non-Uniform Extraordinary Points with Sharp Features

Abstract: We extend the non-uniform rational B-spline (NURBS) representation to arbitrary topology. The method can also support non-uniform sharp features along the extraordinary points.

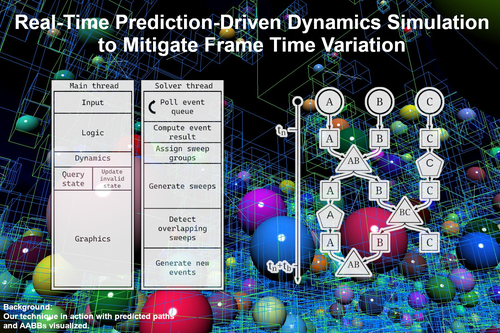

Real-Time Prediction-Driven Dynamics Simulation to Mitigate Frame Time Variation

Abstract: Prediction-driven dynamics method using a graph-based state buffer to minimize the cost of mispredictions for collision detection in real time. It reduces dynamics processing time on the main thread by 2-7x.

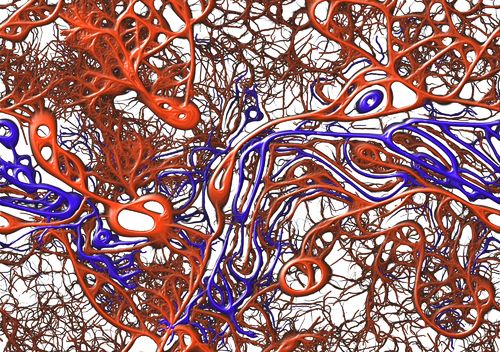

Red Versus Blue: Slime Mold Civil War

Abstract: By modifying the mathematical models of motion of the slime mold Physarum Polycephalum to allow for two competing species, we discover a rich array of patterns.

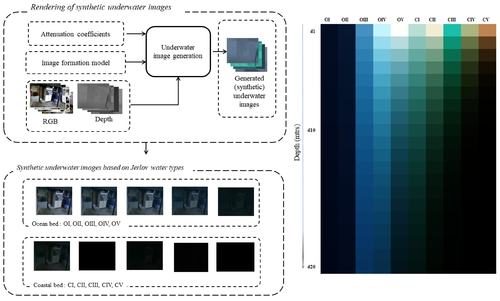

Rendering of Synthetic Underwater Images Towards Restoration

Abstract: We render synthetic underwater images using a revised image formation model, with the goal of learning restoration. Our work is motivated by learning the degradation of images through modeling the absorption and scattering of light.

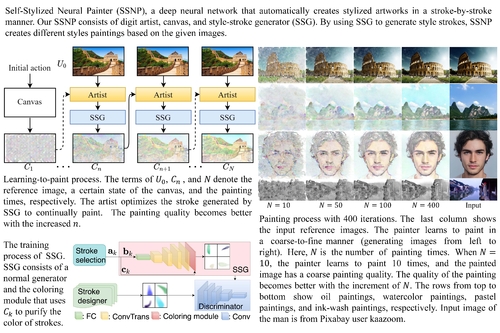

Self-Stylized Neural Painter

Abstract: Self-stylized neural painter (SSNP) uses stylized strokes to create paintings based on input images in a stroke-by-stroke manner. The stroke generator produces stylized strokes mimicking human artists' strokes to improve fidelity. SSNP is able to create diverse art styles of paintings, such as oil painting, watercolor painting, pastel painting, and ink-wash painting.

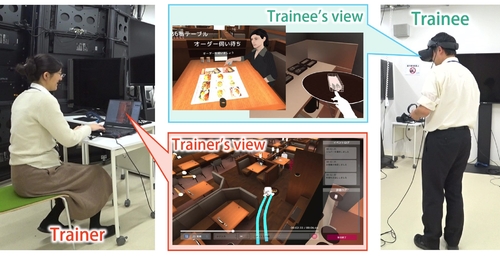

Service Skills Training in Restaurants Using Virtual Reality

Abstract: For improving the effectiveness and quality of training for new employees in a restaurant, we developed a VR training system.

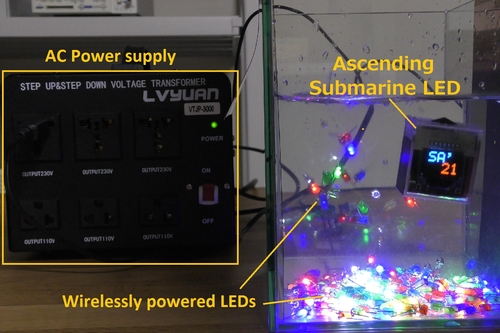

Submarine LED: Wirelessly powered underwater display controlling its buoyancy

Abstract: This work proposes a wirelessly powered underwater display that can control its position by changing its own buoyancy. An evaluation of the proposed underwater wireless powering method is also presented.

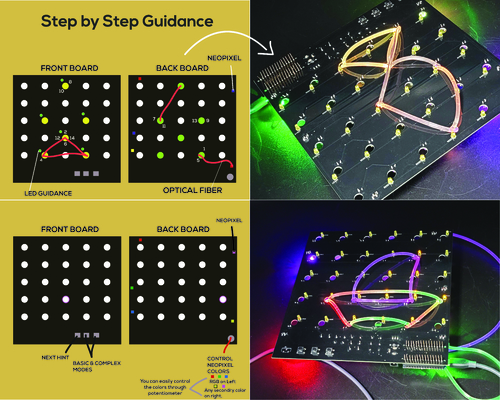

TIEboard: Developing Kids Geometric Thinking through Tangible User Interface

Abstract: TIEboard is a Tangible Interactive Educational toy inspired by the traditional geoboard. It enhances kids' geometric learning experience with haptic feedback and guidance along the way.

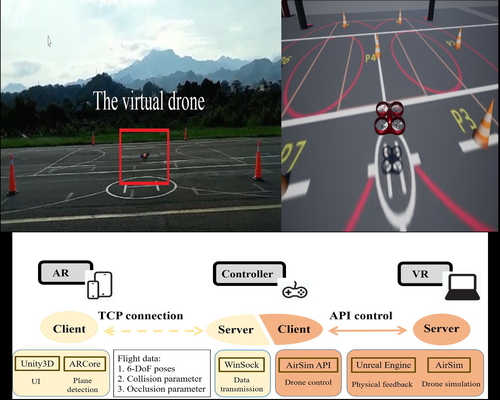

Training to Get a Drone License, Virtually

Abstract: We propose a drone flight training system (DFT MR) with a novel mixed reality (MR) technique to lower the risk of drone crashes for beginners and enhance their sense of actually controlling a drone. The proposed MR system integrates the advantages of both VR and AR by transmitting high-fidelity simulated physical control feedback, such as obstacle collision and wind influence, from VR to the MR device. The system not only benefits those who experience VR sickness but also provides novice drone operators with virtual, crash-free drones for training toward their drone licenses.

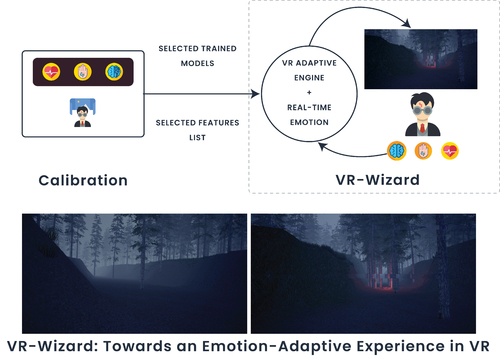

VR-Wizard: Towards an Emotion-Adaptive Experience in VR

Abstract: A research method to evaluate "WizardOfVR" and examine the effect of real-time biofeedback loop-based emotion adaptation in VR on the users' game experience, engagement, and flow experiences using physiological signals.

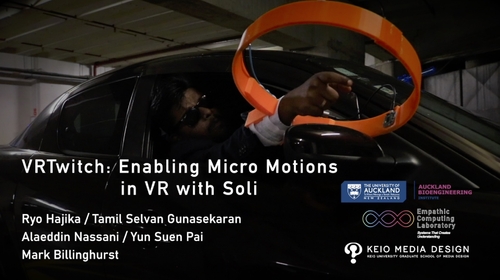

VRTwitch: Enabling Micro-motions in VR with Radar Sensing

Abstract: VRTwitch is a wearable device with an array of miniaturized radar sensors. We demonstrate a VR shooting experience in which micro hand motions enable precise maneuvering of a virtual gun.

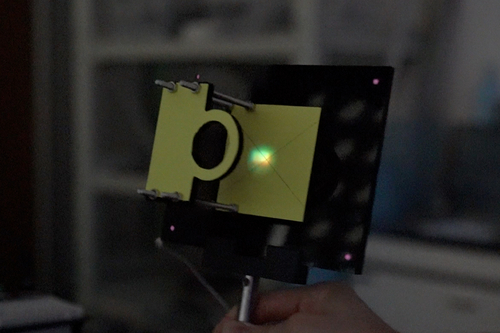

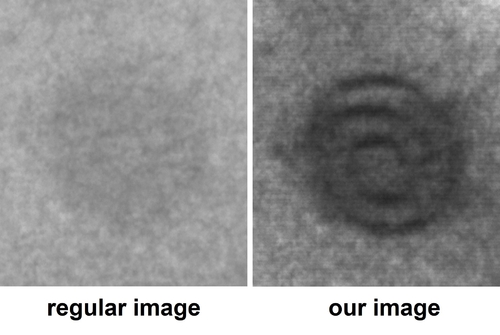

Visibility Enhancement for Transmissive Image using Synchronized Side-by-side Projector-Camera System

Abstract: In this paper, we describe a method to improve the visibility of the target object by capturing transmissive rays without scattering rays using a synchronized projector-camera system.

WAuth: Handwriting-Based Socially-Inclusive Authentication

Abstract: An inclusive group authentication system in which a shared text is uniquely identifiable for each user through their handwriting of it.

eyemR-Talk: Using Speech to Visualise Shared MR Gaze Cues

Abstract: In this poster, we present eyemR-Talk, a Mixed Reality (MR) collaboration system that uses speech input to trigger shared gaze visualisations between remote users. The system uses 360° panoramic video to support collaboration between a local user in the real world in an Augmented Reality (AR) view and a remote collaborator in Virtual Reality (VR). Using specific speech phrases to turn on virtual gaze visualisations, the system enables contextual speech-gaze interaction between collaborators. The overall benefit is to achieve more natural gaze awareness, leading to better communication and more effective collaboration.