11. NPR and Digital Art [Q&A Session]

- Full Access
- Onsite Student Access
- Virtual Full Access

Date/Time: 06 – 17 December 2021

All presentations are available in the virtual platform on-demand.

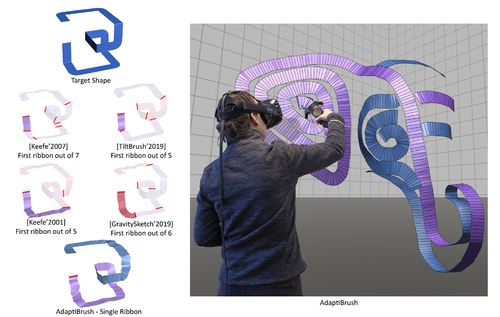

AdaptiBrush: Adaptive General and Predictable VR Ribbon Brush

Abstract: Virtual reality drawing applications let users draw 3D shapes using brushes that form ribbon-shaped (ruled-surface) strokes. Each ribbon is uniquely defined by its user-specified ruling length, its path, and the ruling directions at each point along this path. Existing brushes use the trajectory of a handheld controller in 3D space as the ribbon path, and compute the ruling directions using a fixed mapping from a specific controller coordinate-frame axis. This fixed mapping forces users to rotate the controller, and thus their wrists, to change ribbon normal or ruling directions, and requires substantial physical effort to draw even medium-complexity ribbons. Since humans' ability to rotate their wrists continuously is limited, the space of ribbon geometry users can comfortably draw using these brushes is limited. These brushes can also be unpredictable, producing ribbons with unexpectedly varying width or flipped and wobbly normals in response to seemingly natural hand gestures. Our AdaptiBrush ribbon brush system dramatically extends the space of ribbon geometry users can comfortably draw while enabling users to accurately predict the ribbon shape that a given hand motion produces. We achieve this by introducing a novel adaptive ruling direction computation method, enabling users to easily change ribbon ruling and normal orientation using predominantly translational motion of the controller, and thus of the wrist. We facilitate ease of use by computing predictable ruling directions that change smoothly in both world and controller coordinate systems, and facilitate ease of learning by prioritizing ruling directions that are well aligned with one of the controller coordinate system axes.
Our comparative user studies confirm that our more general and predictable ruling computation leads to significant improvements in brush usability and effectiveness compared to all prior brushes; in a head-to-head comparison, users preferred AdaptiBrush over the next-best brush by a margin of 2 to 1.
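The abstract defines a ribbon by its path, its ruling directions and its ruling length, and describes prior brushes that derive the ruling from a fixed controller axis. The Python sketch below illustrates only that fixed-axis baseline, not AdaptiBrush's adaptive method. The function names, the row-major rotation matrices, and the choice to center the ribbon on the path are assumptions made for illustration.

```python
import math
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Mat3 = Tuple[Vec3, Vec3, Vec3]  # row-major 3x3 controller rotation (assumed format)


def mat_vec(m: Mat3, v: Vec3) -> Vec3:
    """Multiply a row-major 3x3 matrix by a 3-vector."""
    return tuple(sum(m[r][c] * v[c] for c in range(3)) for r in range(3))


def normalize(v: Vec3) -> Vec3:
    n = math.sqrt(sum(x * x for x in v))
    if n == 0.0:
        raise ValueError("zero-length ruling direction")
    return tuple(x / n for x in v)


def fixed_axis_rulings(rotations: Sequence[Mat3],
                       axis: Vec3 = (1.0, 0.0, 0.0)) -> List[Vec3]:
    """Baseline brush: the ruling at each sample is one fixed controller-local
    axis mapped to world space, so it only changes when the controller rotates."""
    return [normalize(mat_vec(r, axis)) for r in rotations]


def ribbon_vertices(path: Sequence[Vec3], rulings: Sequence[Vec3],
                    length: float) -> List[Vec3]:
    """Sample the ruled surface as a triangle strip: two vertices per path
    point, offset by half the ruling length on each side (centering assumed)."""
    if len(path) != len(rulings):
        raise ValueError("path and rulings must have the same number of samples")
    half = 0.5 * length
    strip: List[Vec3] = []
    for p, r in zip(path, rulings):
        strip.append(tuple(p[i] - half * r[i] for i in range(3)))
        strip.append(tuple(p[i] + half * r[i] for i in range(3)))
    return strip
```

With the same rotation at every sample, the rulings stay constant however far the controller translates, which is why changing the ribbon's orientation with such a brush requires rotating the wrist.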

Author(s)/Presenter(s):

Enrique Rosales, University of British Columbia, Universidad Panamericana, Canada

Chrystiano Araújo, University of British Columbia, Canada

Jafet Rodriguez, Universidad Panamericana, Mexico

Nicholas Vining, University of British Columbia, NVIDIA, Canada

Dongwook Yoon, University of British Columbia, Canada

Alla Sheffer, University of British Columbia, Canada

Multi-Class Inverted Stippling

Abstract: We introduce inverted stippling, a method to mimic an inversion technique used by artists when performing stippling. To this end, we extend Linde-Buzo-Gray (LBG) stippling to multi-class LBG (MLBG) stippling with multiple layers. MLBG stippling couples the layers stochastically to optimize for per-layer and global blue-noise properties. We propose a stipple-based filling method to generate solid color backgrounds for inverting areas. Our experiments demonstrate the effectiveness of MLBG; users prefer our inverted stippling results over traditional stipple renderings. In addition, we showcase MLBG with color stippling and dynamic multi-class blue-noise sampling, which is possible due to its support for temporal coherence.
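MLBG builds on single-class LBG stippling, in which stipples repeatedly move to the density-weighted centroids of their Voronoi cells, cells with too little mass lose their stipple, and cells with too much mass have their stipple split. The pure-Python sketch below shows only that simplified single-class loop, not the multi-class coupling described above. The brute-force pixel Voronoi, the hysteresis schedule (`h0`, `dh`), the split offset, and the guard against removing every stipple are all assumptions chosen for illustration.

```python
import math
import random
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def assign_cells(points: Sequence[Point],
                 density: Sequence[Sequence[float]]) -> List[List[float]]:
    """Discrete Voronoi tessellation by brute force: each pixel with positive
    density goes to its nearest stipple. Returns [mass, sum(d*x), sum(d*y)]
    for each stipple's cell."""
    acc = [[0.0, 0.0, 0.0] for _ in points]
    for y, row in enumerate(density):
        for x, d in enumerate(row):
            if d <= 0.0:
                continue
            px, py = x + 0.5, y + 0.5  # pixel centre
            k = min(range(len(points)),
                    key=lambda i: (points[i][0] - px) ** 2 + (points[i][1] - py) ** 2)
            acc[k][0] += d
            acc[k][1] += d * px
            acc[k][2] += d * py
    return acc


def lbg_stipple(density, target_mass, h0=0.5, dh=0.05, max_iters=50, seed=0) -> List[Point]:
    """Simplified single-class LBG stippling with hypothetical parameters.
    Stops once an iteration neither splits nor removes a stipple."""
    if target_mass <= 0:
        raise ValueError("target_mass must be positive")
    if not any(d > 0 for row in density for d in row):
        raise ValueError("density must contain some positive values")
    rng = random.Random(seed)
    height, width = len(density), len(density[0])
    points: List[Point] = [(width / 2.0, height / 2.0)]
    for it in range(max_iters):
        # Hysteresis widens each iteration (assumed schedule); capping it below 2
        # keeps the lower bound positive, so empty cells are always removed.
        h = min(h0 + it * dh, 1.99)
        lower = (1.0 - h / 2.0) * target_mass
        upper = (1.0 + h / 2.0) * target_mass
        acc = assign_cells(points, density)
        new_points: List[Point] = []
        changed = False
        for m, sx, sy in acc:
            if m < lower:
                changed = True  # underweight cell: drop its stipple
                continue
            cx, cy = sx / m, sy / m  # density-weighted centroid of the cell
            if m > upper:
                changed = True  # overweight cell: split around the centroid
                a = rng.uniform(0.0, 2.0 * math.pi)
                dx, dy = 0.5 * math.cos(a), 0.5 * math.sin(a)
                new_points += [(cx + dx, cy + dy), (cx - dx, cy - dy)]
            else:
                new_points.append((cx, cy))
        if not new_points:
            # Guard for this sketch: keep the heaviest cell's stipple.
            m, sx, sy = max(acc, key=lambda c: c[0])
            new_points = [(sx / m, sy / m)]
        points = new_points
        if not changed:
            break
    return points
```

Calling `lbg_stipple([[1.0] * 16 for _ in range(16)], target_mass=16.0)` places stipples over a uniform 16×16 image; lowering `target_mass` yields more, smaller-mass cells and therefore more stipples.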

Author(s)/Presenter(s):

Christoph Schulz, University of Stuttgart, Germany

Kin Chung Kwan, University of Konstanz, Germany

Michael Becher, University of Stuttgart, Germany

Daniel Baumgartner, University of Stuttgart, Germany

Guido Reina, University of Stuttgart, Germany

Oliver Deussen, University of Konstanz, Germany

Daniel Weiskopf, University of Stuttgart, Germany

Physically-based Feature Line Rendering

Abstract: Feature lines visualize the shape and structure of 3D objects, and are an essential component of many non-photorealistic rendering styles. Existing feature line rendering methods, however, are only able to render feature lines in limited contexts, such as on immediately visible surfaces or in specular reflections. We present a novel, path-based method for feature line rendering that allows for the accurate rendering of feature lines in the presence of complex physical phenomena such as glossy reflection, depth-of-field, and dispersion. Our key insight is that feature lines can be modeled as view-dependent light sources. These light sources can be sampled as part of ordinary paths, and integrate seamlessly into existing physically-based rendering methods. We illustrate the effectiveness of our method in several real-world rendering scenarios involving a variety of physical phenomena.

Author(s)/Presenter(s):

Rex West, The University of Tokyo, Japan

Shading Rig: Dynamic Art-Directable Stylised Shading for 3D Characters

Abstract: Toon shading is notoriously difficult to control on dynamic 3D characters. Shading Rig lets artists control how stylised shading responds to lighting changes, and reproduces the art-directed shading in real-time.

Author(s)/Presenter(s):

Lohit Petikam, Victoria University of Wellington Computational Media Innovation Centre, New Zealand

Ken Anjyo, OLM Digital, Victoria University of Wellington Computational Media Innovation Centre, New Zealand

Taehyun Rhee, Victoria University of Wellington Computational Media Innovation Centre, New Zealand

SketchGNN: Semantic Sketch Segmentation with Graph Neural Networks

Abstract: We introduce SketchGNN, a convolutional graph neural network for semantic segmentation of freehand vector sketches. We treat a sketch as a graph, with nodes representing the sampled points and edges encoding the stroke structure information.
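The abstract treats a sketch as a graph whose nodes are sampled points and whose edges encode stroke structure. The Python sketch below shows one plausible way to build such an input graph; uniform arc-length resampling and linking consecutive samples within each stroke (in both directions) are assumptions, and the helper names are hypothetical, not SketchGNN's published pipeline.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def resample(stroke: Sequence[Point], n: int) -> List[Point]:
    """Resample a polyline stroke to n points evenly spaced by arc length."""
    if n < 2 or len(stroke) < 2:
        raise ValueError("need n >= 2 and a stroke with at least two points")
    seg = [math.dist(a, b) for a, b in zip(stroke, stroke[1:])]
    total = sum(seg)
    if total == 0.0:
        return [stroke[0]] * n  # degenerate stroke: all points coincide
    out: List[Point] = []
    j, acc = 0, 0.0  # current segment index and arc length before it
    for k in range(n):
        target = total * k / (n - 1)
        while j < len(seg) - 1 and acc + seg[j] < target:
            acc += seg[j]
            j += 1
        t = 0.0 if seg[j] == 0.0 else (target - acc) / seg[j]
        t = min(max(t, 0.0), 1.0)
        a, b = stroke[j], stroke[j + 1]
        out.append((a[0] + t * (b[0] - a[0]), a[1] + t * (b[1] - a[1])))
    return out


def sketch_to_graph(strokes: Sequence[Sequence[Point]]):
    """Nodes: every sampled point in stroke order. Edges: consecutive samples
    within the same stroke, stored in both directions. Returns
    (nodes, edges, stroke_ids), where stroke_ids[i] is node i's stroke."""
    nodes: List[Point] = []
    stroke_ids: List[int] = []
    edges: List[Tuple[int, int]] = []
    for sid, stroke in enumerate(strokes):
        start = len(nodes)
        for p in stroke:
            nodes.append(p)
            stroke_ids.append(sid)
        for i in range(start, len(nodes) - 1):
            edges.append((i, i + 1))
            edges.append((i + 1, i))
    return nodes, edges, stroke_ids
```

Because edges never join the last sample of one stroke to the first sample of the next, stroke membership is recoverable from the graph's connected components, which is one way the "stroke structure information" can be carried into the network.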

Author(s)/Presenter(s):

Lumin Yang, Zhejiang University, China

Jiajie Zhuang, Zhejiang University, China

Hongbo Fu, City University of Hong Kong, Hong Kong

Xiangzhi Wei, Shanghai Jiao Tong University, China

Kun Zhou, Zhejiang University, China

Youyi Zheng, Zhejiang University, China