04. Computational Photography [Q&A Session]

Full Access

Onsite Student Access

Virtual Full Access

Date/Time: 06 – 17 December 2021

All presentations are available in the virtual platform on-demand.

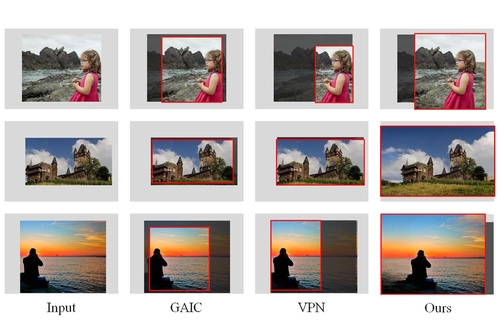

Aesthetic-guided Outward Image Cropping

Abstract: Image cropping is a commonly used post-processing operation for adjusting the scene composition of an input photograph, thereby improving its aesthetics. Existing automatic image cropping methods are all bounded by the image border, and thus have very limited freedom for aesthetic improvement if the original scene composition is far from ideal, e.g., when the main object is too close to the image border. In this paper, we propose a novel, aesthetic-guided outward image cropping method. It can go beyond the image border to create a desirable composition that is unachievable using previous cropping methods. Our method first uses a FOV evaluation model to determine how far the content of the input image should be extrapolated. We then synthesize the image content in the extrapolated region and seek an optimal aesthetic crop within the expanded FOV by jointly considering the aesthetics of the cropped view and the local image quality of the extrapolated content. Experimental results show that our method can generate more visually pleasing image compositions in cases that are difficult for previous image cropping tools due to the border constraint, and can also automatically degrade to an inward cropping method when high-quality image extrapolation is infeasible.

Author(s)/Presenter(s):

Lei Zhong, Nankai University, China

Feng-Heng Li, Nankai University, China

Hao-Zhi Huang, Xverse, China

Yong Zhang, Tencent AI Lab, China

Shao-Ping Lu, Nankai University, China

Jue Wang, Tencent AI Lab, China

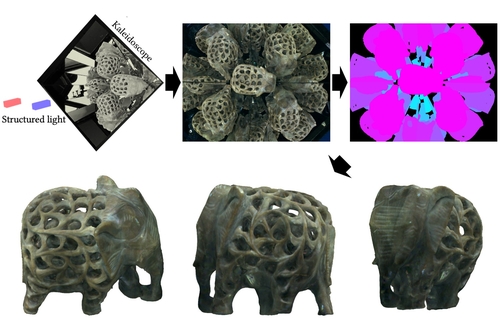

Kaleidoscopic Structured Light

Abstract: Full surround 3D imaging for shape acquisition is essential for generating digital replicas of real-world objects. Surrounding an object we seek to scan with a kaleidoscope, that is, a configuration of multiple planar mirrors, produces an image of the object that encodes information from a combinatorially large number of virtual viewpoints. This information is practically useful for the full surround 3D reconstruction of the object, but cannot be used directly, as we do not know which virtual viewpoint each image pixel corresponds to (the pixel label). We introduce a structured light system that combines a projector and a camera with a kaleidoscope. We then prove that we can accurately determine the labels of projector and camera pixels, for arbitrary kaleidoscope configurations, using the projector-camera epipolar geometry. We use this result to show that our system can serve as a multi-view structured light system with hundreds of virtual projectors and cameras. This makes our system capable of scanning complex shapes precisely and with full coverage. We demonstrate the advantages of the kaleidoscopic structured light system by scanning objects that exhibit a large range of shapes and reflectances.
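As a rough illustration of why a few planar mirrors yield a combinatorially large number of virtual viewpoints, the sketch below reflects a camera center across sequences of mirror planes. It is not the authors' labeling algorithm: the function names, the plane parameterization, and the order in which reflections are applied are assumptions made for this example, and visibility and physical realizability of each bounce sequence are ignored.

```python
from itertools import product

def reflect(point, normal, offset):
    """Reflect a 3D point across the plane {x : normal . x = offset} (unit normal)."""
    d = sum(p * n for p, n in zip(point, normal)) - offset
    return tuple(p - 2.0 * d * n for p, n in zip(point, normal))

def virtual_viewpoints(camera, mirrors, max_bounces):
    """Map each mirror-index sequence (a hypothetical 'label') to a virtual camera center.

    mirrors is a list of (unit_normal, offset) pairs. Sequences that hit the
    same mirror twice in a row are skipped, since they undo each other.
    """
    views = {(): camera}
    for k in range(1, max_bounces + 1):
        for seq in product(range(len(mirrors)), repeat=k):
            if any(a == b for a, b in zip(seq, seq[1:])):
                continue
            p = camera
            for i in seq:  # assumed convention: apply reflections in sequence order
                p = reflect(p, *mirrors[i])
            views[seq] = p
    return views

# Two parallel mirrors at x = 1 and x = -1, real camera at the origin.
mirrors = [((1.0, 0.0, 0.0), 1.0), ((1.0, 0.0, 0.0), -1.0)]
views = virtual_viewpoints((0.0, 0.0, 0.0), mirrors, max_bounces=2)
```

With m mirrors and up to k bounces, this enumeration produces 1 + m + m(m-1) + ... candidate labels, which is the growth the abstract refers to.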

Author(s)/Presenter(s):

Byeongjoo Ahn, Carnegie Mellon University, United States of America

Ioannis Gkioulekas, Carnegie Mellon University, United States of America

Aswin C. Sankaranarayanan, Carnegie Mellon University, United States of America

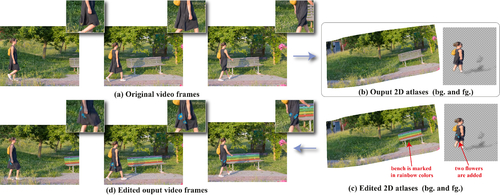

Layered Neural Atlases for Consistent Video Editing

Abstract: We present a method that decomposes, or “unwraps”, an input video into a set of layered 2D atlases, each providing a unified representation of the appearance of an object (or background) over the video. For each pixel in the video, our method estimates its corresponding 2D coordinate in each of the atlases, giving us a consistent parameterization of the video, along with an associated alpha (opacity) value. Importantly, we design our atlases to be interpretable and semantic, which facilitates easy and intuitive editing in the atlas domain, with minimal manual work required. Edits applied to a single 2D atlas (or input video frame) are automatically and consistently mapped back to the original video frames, while preserving occlusions, deformation, and other complex scene effects such as shadows and reflections. Our method employs a coordinate-based Multilayer Perceptron (MLP) representation for mappings, atlases, and alphas, which are jointly optimized on a per-video basis, using a combination of video reconstruction and regularization losses. By operating purely in 2D, our method does not require any prior 3D knowledge about scene geometry or camera poses, and can handle complex dynamic real world videos. We demonstrate various video editing applications, including texture mapping, video style transfer, image-to-video texture transfer, and segmentation/labeling propagation, all automatically produced by editing a single 2D atlas image.
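The per-pixel reconstruction described in the abstract (a mapping into each atlas, an atlas color lookup, and an alpha value) can be sketched with plain callables standing in for the MLPs. This is a toy illustration only: `composite`, `render_pixel`, and the example layers are hypothetical names, and the blending shown is standard back-to-front "over" compositing, which may differ in detail from the paper's formulation.

```python
def composite(samples):
    """Back-to-front 'over' compositing of (rgb, alpha) samples for one pixel."""
    out = (0.0, 0.0, 0.0)
    for rgb, alpha in samples:
        out = tuple(alpha * c + (1.0 - alpha) * o for c, o in zip(rgb, out))
    return out

def render_pixel(x, y, t, layers):
    """layers: list of (mapping, atlas, alpha) callables, ordered back to front.

    mapping(x, y, t) -> (u, v) atlas coordinate
    atlas(u, v)      -> rgb color stored in the atlas
    alpha(x, y, t)   -> opacity of this layer at the video pixel
    """
    samples = []
    for mapping, atlas, alpha in layers:
        u, v = mapping(x, y, t)
        samples.append((atlas(u, v), alpha(x, y, t)))
    return composite(samples)

# Toy scene: an opaque red background that scrolls over time, plus a
# semi-transparent foreground whose atlas is a single flat color.
background = (lambda x, y, t: (x - t, y),
              lambda u, v: (1.0, 0.0, 0.0),
              lambda x, y, t: 1.0)

def make_foreground(color):
    return (lambda x, y, t: (x, y),
            lambda u, v: color,
            lambda x, y, t: 0.25)

# Editing the foreground atlas once (blue to green) changes every frame consistently.
original = [render_pixel(3, 4, t, [background, make_foreground((0.0, 0.0, 1.0))]) for t in range(3)]
edited = [render_pixel(3, 4, t, [background, make_foreground((0.0, 1.0, 0.0))]) for t in range(3)]
```

Because every frame looks up its color through the same atlas, a single atlas edit propagates to all frames without per-frame work.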

Author(s)/Presenter(s):

Yoni Kasten, Weizmann Institute of Science, Israel

Dolev Ofri, Weizmann Institute of Science, Israel

Oliver Wang, Adobe Research, United States of America

Tali Dekel, Weizmann Institute of Science, Israel

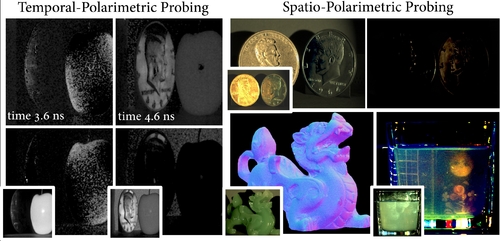

Polarimetric Spatio-Temporal Light Transport Probing

Abstract: Light emitted from a source into a scene can undergo complex interactions with multiple scene surfaces of different material types before being reflected towards a detector. During this transport, every surface reflection and propagation is encoded in the properties of the photons that ultimately reach the detector, including travel time, direction, intensity, wavelength and polarization. Conventional imaging systems capture intensity by integrating over all other dimensions of the incident light into a single quantity, hiding this rich scene information in the accumulated measurements. Existing methods are capable of untangling these measurements into their spatial and temporal dimensions, fueling geometric scene understanding tasks. However, examining polarimetric material properties jointly with geometric properties is an open challenge that could enable unprecedented capabilities beyond geometric scene understanding, allowing the incorporation of material-dependent semantics and imaging through complex transport, such as macroscopic scattering. In this work, we close this gap and propose a computational light-transport imaging method that captures the spatially- and temporally-resolved complete polarimetric response of a scene, which encodes rich material properties. Our method hinges on a novel 7D tensor theory of light transport. We discover low-rank structures in the polarimetric tensor dimension and propose a data-driven rotating ellipsometry method that learns to exploit redundancy of the polarimetric structures. We instantiate our theory in two imaging prototypes: spatio-polarimetric imaging and coaxial temporal-polarimetric imaging. This allows us, for the first time, to decompose scene light transport into temporal, spatial, and complete polarimetric dimensions that unveil scene properties hidden to conventional methods. We validate the applicability of our method on diverse tasks, including shape reconstruction with subsurface scattering, seeing through scattering media, untangling multi-bounce light transport, breaking metamerism with polarization, and spatio-polarimetric decomposition of crystals. The proposed method outperforms conventional methods in all experiments.
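For readers unfamiliar with polarimetric quantities, a complete polarimetric response is commonly expressed with Stokes vectors and 4x4 Mueller matrices. The sketch below is textbook Mueller calculus for an ideal linear polarizer, shown only as background; it is not the authors' 7D tensor formulation or their ellipsometry procedure, and the helper names are chosen for this example.

```python
import math

def linear_polarizer(theta):
    """Mueller matrix of an ideal linear polarizer, transmission axis at theta (radians)."""
    c, s = math.cos(2 * theta), math.sin(2 * theta)
    return [
        [0.5,     0.5 * c,     0.5 * s,     0.0],
        [0.5 * c, 0.5 * c * c, 0.5 * c * s, 0.0],
        [0.5 * s, 0.5 * c * s, 0.5 * s * s, 0.0],
        [0.0,     0.0,         0.0,         0.0],
    ]

def apply(mueller, stokes):
    """Propagate a Stokes vector [I, Q, U, V] through an optical element."""
    return [sum(m * s for m, s in zip(row, stokes)) for row in mueller]

def intensity(stokes):
    """A conventional sensor records only the first Stokes component."""
    return stokes[0]

unpolarized = [1.0, 0.0, 0.0, 0.0]
horizontal = apply(linear_polarizer(0.0), unpolarized)      # half the light passes
parallel = apply(linear_polarizer(0.0), horizontal)         # second, aligned polarizer
crossed = apply(linear_polarizer(math.pi / 2), horizontal)  # second, crossed polarizer
```

The aligned and crossed cases carry very different intensities even though the light entering the first polarizer is identical, which illustrates the kind of information an intensity-only measurement integrates away.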

Author(s)/Presenter(s):

Seung-Hwan Baek, Princeton University, United States of America

Felix Heide, Princeton University, United States of America

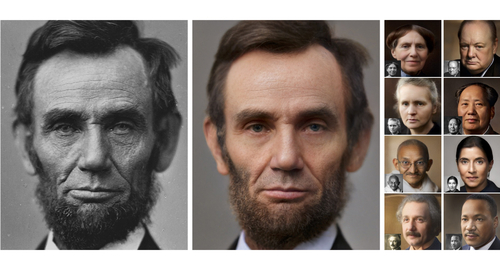

Time-Travel Rephotography

Abstract: Many historical people were only ever captured in old, faded, black-and-white photos that are distorted due to the limitations of early cameras and the passage of time. This paper simulates traveling back in time with a modern camera to rephotograph famous subjects. Unlike conventional image restoration filters, which apply independent operations like denoising, colorization, and super-resolution, we leverage the StyleGAN2 framework to project old photos into the space of modern high-resolution photos, achieving all of these effects in a unified framework. A unique challenge with this approach is retaining the identity and pose of the subject in the original photo, while discarding the many artifacts frequently seen in low-quality antique photos. Our comparisons to current state-of-the-art restoration filters show significant improvements and compelling results for a variety of important historical people.

Author(s)/Presenter(s):

Xuan Luo, University of Washington, United States of America

Cecilia Zhang, Adobe Inc., University of California Berkeley, United States of America

Paul Yoo, University of Washington, United States of America

Ricardo Martin-Brualla, Google Research, United States of America

Jason Lawrence, Google Research, United States of America

Steven M. Seitz, University of Washington, Google Research, United States of America